Last Updated on 9th December 2021

Many of us, including young people, use WhatsApp daily. It’s established itself as a messaging giant in the world of social media.

But new rivals are arriving on the scene, which could put more pressure on WhatsApp as it battles to defend its privacy policy following a recent backlash.

The backlash concerns how the Facebook-owned company uses the data it collects about each of the app’s users.

©whatsappbrand.com

WhatsApp is updating its privacy policy and user terms, and anyone who doesn’t accept them will have to leave the platform. The idea is that Facebook will then be able to use the data to inform personalised advertising across all of its platforms, including Instagram.

The recent frenzy has meant millions of people have switched to alternative services such as Signal and Telegram.

Here’s what we know so far…

What is changing?

What will the data be used for?

The data shared will be for three new features:

What has been WhatsApp’s response?

So why have people suddenly moved to other services?

Is Telegram just as safe?

©signal.org

What does this all mean for privacy and children?

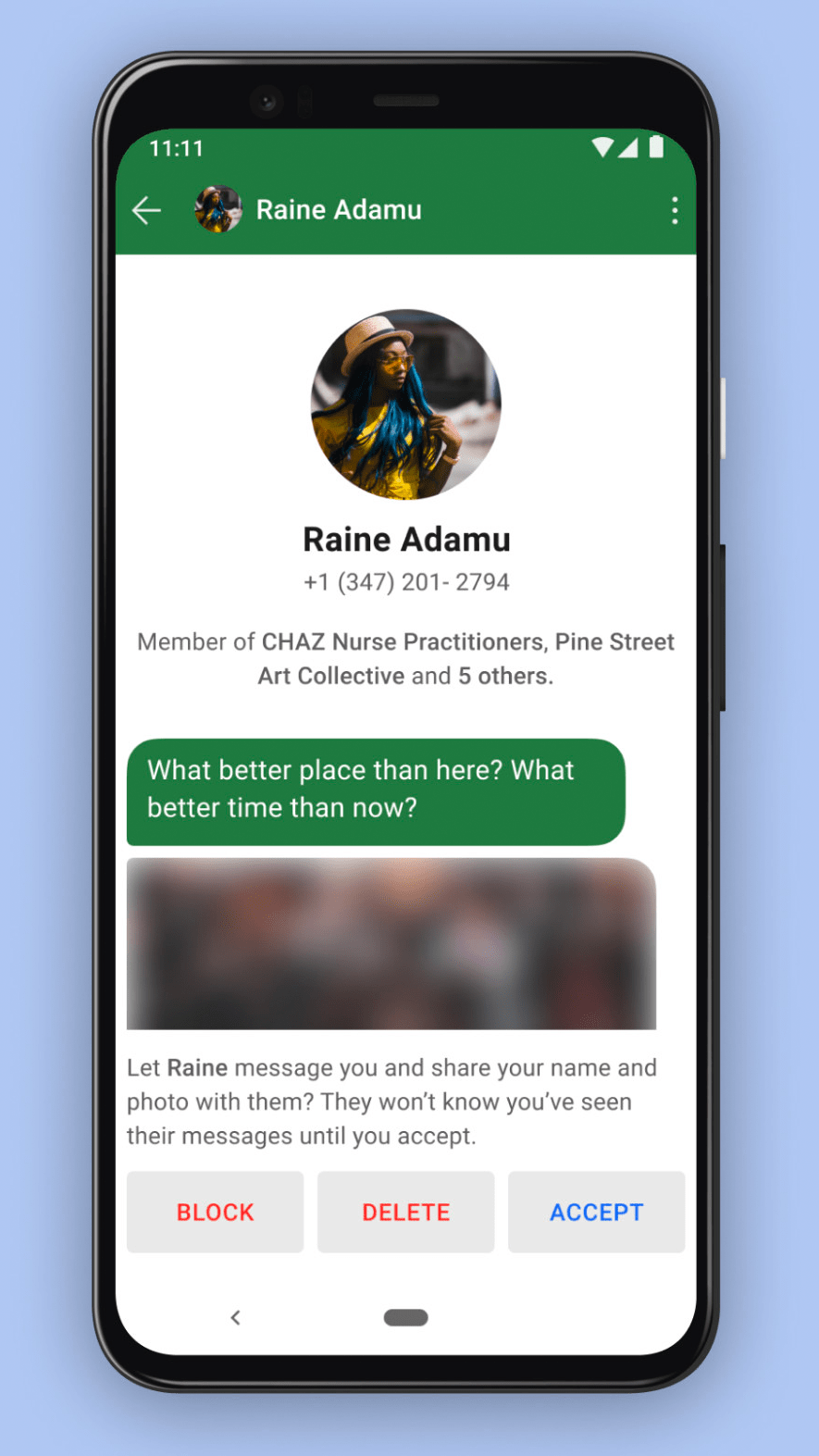

Currently, WhatsApp rules state that all users must be over the age of 16. There is, however, no enforcement or adequate age verification process to back this up.

The fact that messages on WhatsApp are encrypted still poses potential risks. Encryption can make it more difficult to catch and prosecute online child sex offenders, as messages can’t always be deciphered.

The new update from WhatsApp means that data will be shared with Facebook for marketing purposes, to drive personalised advertising to users who live outside the European Union. For now, UK users are treated the same as those in the EU.

However, because of Brexit, Facebook services in the UK will be transferred away from Facebook Ireland (Facebook’s EU base), which leaves the potential for data privacy changes. Whether this happens is yet to be confirmed.

WhatsApp has said nothing will change, although proof has yet to be published.

What should parents keep in mind?

Top Tips for Parents

