Last Updated on 9th December 2021

This article originally appeared on Huffpost and can be read here.

For the ICO’s age appropriate design to work, we need better age verification, safeguarding expert Jim Gamble QPM writes.

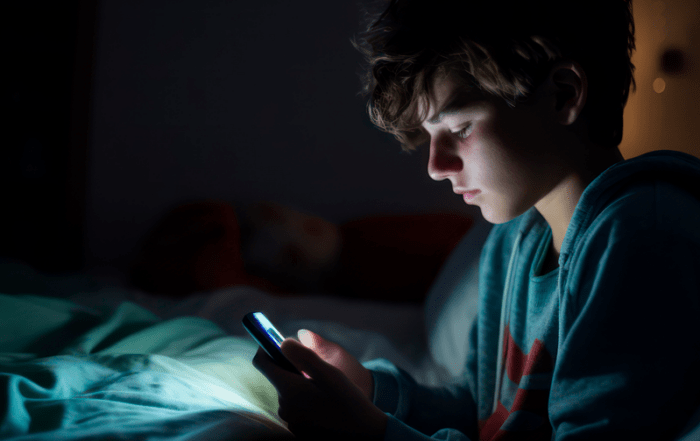

In this digital age, where parents use screens to pacify, distract and engage their toddlers, it’s no surprise that children and young people pester parents for earlier access to devices of their own. They are going online ‘solo’, earlier than ever before.

Smartphones and tablets are therefore no longer reserved for older children. According to a recent Ofcom report, 42% of 5-7 year olds have their own tablet. Our own experience working in schools across the UK with the Safer Schools partnership suggests that this is a conservative estimate.

Protecting children and young people from inappropriate material is not a new challenge. The top shelf of newsagents limited but didn’t always prevent the vertically challenged (small children) from reaching unsuitable content. Nor has the law always been able to stop a highly motivated youth from bypassing restrictions to access alcohol. However, online anonymity and universal accessibility are a game changer in virtual spaces, and for how we protect our children and young people.


The ICO has recently published its finalised version of ‘Age Appropriate Design: Code of Practice for Online Services’ (first seen in draft form last year), which aims to protect children’s privacy in online spaces. But does it go far enough?

The code will require digital services to automatically provide children with a “built-in baseline of data protection” whenever they download a new app or game, or visit a website. While many of the proposals in the new code are well-intentioned, and the legal requirements for sites to assess risk on their platforms are indeed well placed, the real challenge will come from how the code is practically enforced and tested.

Age verification has been debated at length, with the government backtracking last year on its plan to prevent children and young people from accessing adult porn sites. The harsh reality was the final realisation that its tentative plans just weren’t viable in practice.

Age verification, if not the silver bullet, remains the critical gateway for protecting the health and wellbeing of the young and vulnerable. Any active attempt at age verification needs to begin by acknowledging that children (like adults) can and do lie about their age.


The new code prompts digital service providers to consider in their design the age range and developmental stage of children and young people who access their platforms. Many of the most popular sites already do this. On Facebook and other platforms, the language and advertising a user is exposed to are constructed (and targeted) based on that user’s age. However, this is undermined by weak age verification, i.e. self-declaration.

The ICO code suggests several solutions to verify the age of users, including artificial intelligence, third-party age verification services, and ‘hard identifiers’ (passports/ID cards). The casual reader would be forgiven for thinking these are already widely used, tried and tested solutions. The problem is that they’re not.

In the absence of agreed workable models, choice will invariably mean that providers take the path of least resistance and do what is easiest for them. In my experience, this generally defaults to self-declaration and/or an unrealistic age classification that mitigates their responsibility but doesn’t address the problems for their undeclared underage users.

Not that long ago, while visiting a primary school with the Ineqe Safeguarding Group, I spoke with a boy aged 10. Already a user of some of the popular sites whose terms and conditions required him to be 13, he explained his approach to age verification.

He signed up, not using the date of birth of a 13-year-old but as if he was 15. He explained that social media companies are very clever and that if he had said 13, that would have been too obvious a lie. The nodding heads of his peers implied that this logic was accepted.

By the time he is 13 and old enough to visit the sites he routinely frequents, those sites will think he is 18. His ‘virtual self’ has aged, become an adult and is subject and exposed to targeted advertising, language and content meant for adults.

No matter how good the systems are, children and young people will find ways around them. So, our approach to safeguarding needs to be smarter, and we can counter these problems by educating and empowering them to better protect themselves.

In school, teachers need to target the year group in which the majority of the class are due to turn 13. The classroom-based lesson should focus on the benefits of using their true age on social media platforms and on getting them to commit to changing their original fake date of birth to their true one. After all, they can now legitimately use most of the sites they already frequent. Homework on this subject involving parents or carers will help spread and reinforce this simple but powerful message.

I believe the government is moving in the right direction regarding online harms and my advice to them is simply this: Pause to consider the next steps, keep them simple and, above all else, get the basics right.
