Last Updated on 5th April 2024

18th August 2023

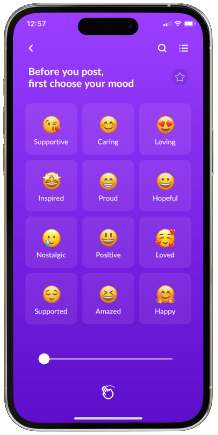

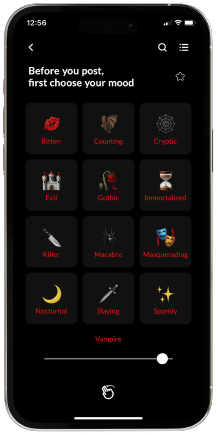

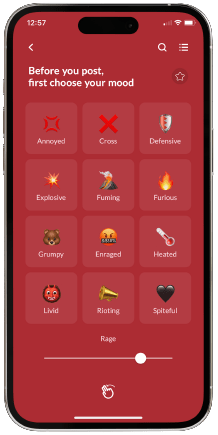

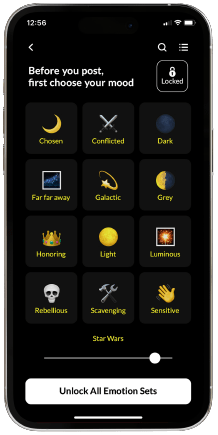

Our online safety experts have received reports from our Safer School partners about a peer support app that could be reinforcing harmful behaviour. Vent markets itself to children and young people as a platform where they can express themselves, “chill out” and have their mood “lifted”. We have reviewed and tested this app and found that it features unhealthy and potentially dangerous behaviours, some of which are age-inappropriate or illegal.

Here’s what you need to know…

What is Vent?

Age Rating

Vent states that users can only post to the app if they are ‘aged 13 years or older’. However, other ratings suggest 16+ or 17+ age limits, as the app may include “suggestive themes” such as profanity/crude humour, mild sexual content, nudity, and drug use references. This content is not suitable for Vent’s suggested age rating.

Our testers also noted that age verification on this platform is ineffective. Users only need an email address and a password to create an account, and are simply asked to tick a box that says, “I am over 16 years of age.”

What are the key functions?

‘I Need Help’

Within the user profile section, users can find an ‘I Need Help’ option. This is meant to provide anyone who is “having a really difficult time” with anonymous support from a trained provider. Vent then gives instructions for a 24/7 text line it calls ‘Vent Crisis Messenger’. After testing the app, we discovered that this is actually connected to the Shout helpline.

Our online safety experts contacted Shout with concerns about the use of its helpline in the Vent app. A Shout spokesperson confirmed that Shout does not have any relationship with Vent, and that the signposting had been done without Shout’s knowledge or consent.

Our Advice/Top Tips

If you are worried that a child or young person in your care may be using Vent or a similar website/app, don’t panic. Our online safety experts have curated the following advice to help you support those in your care:
